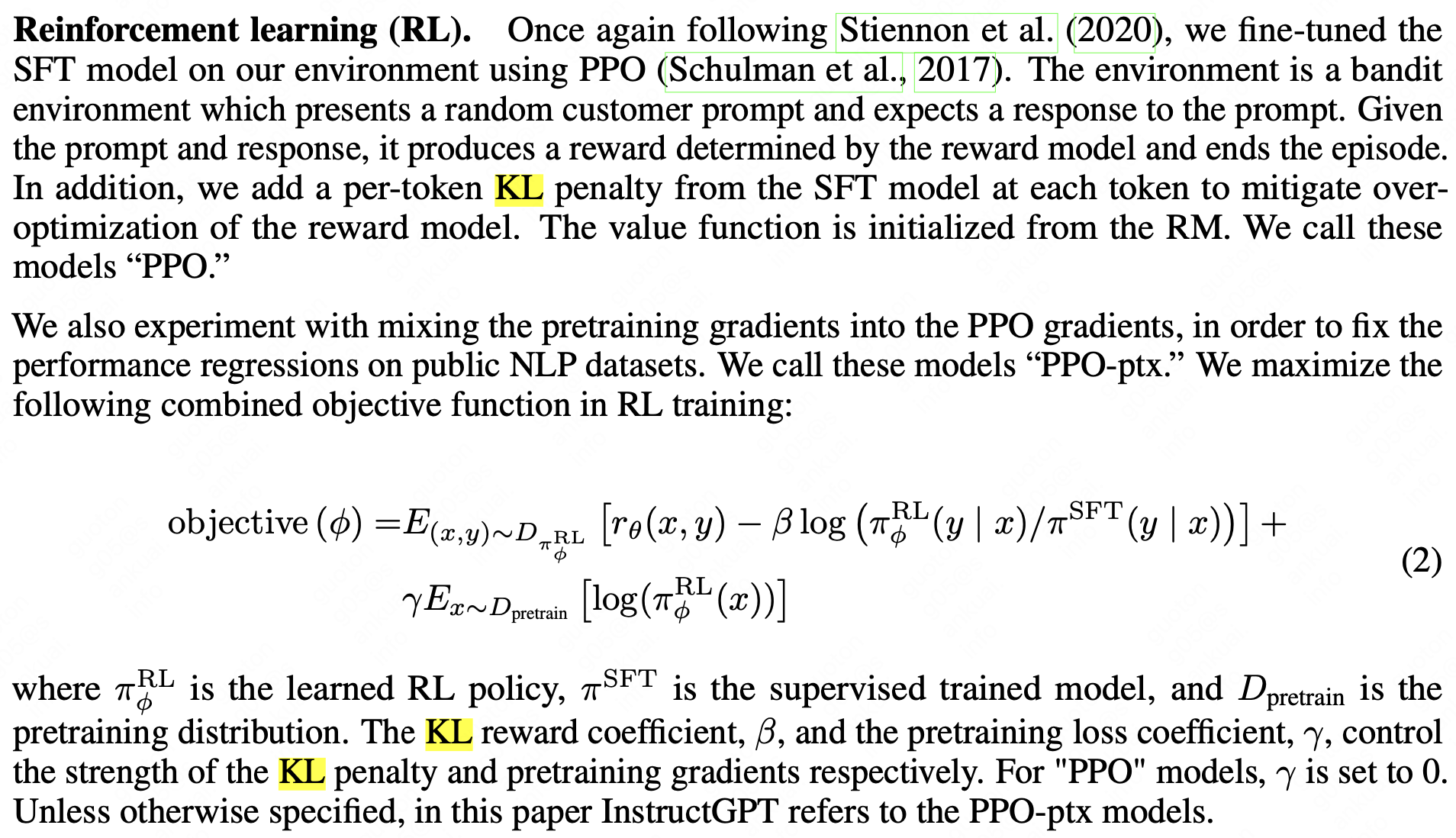

As described in the paper "Training language models to follow instructions with human feedback":

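The KL loss in the figure should be the divergence between the output distributions of the Policy Model and a frozen reference model (in the paper this is the SFT model, not the Reward Model). It is applied as a penalty on the Reward Model's score, so optimization raises the reward while keeping the policy close to the reference.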
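A minimal sketch of how a KL-penalized reward of this kind could be computed for one sampled response, assuming we already have the per-token log-probabilities of that response under the policy and under the frozen reference model. The function name `kl_penalized_reward`, its parameters, and the default `beta` are illustrative assumptions, not code from the paper.

```python
def kl_penalized_reward(reward, policy_logprobs, ref_logprobs, beta=0.02):
    """Return reward - beta * sum_t (log pi_policy(y_t) - log pi_ref(y_t)).

    reward          -- scalar score from the reward model for the whole response
    policy_logprobs -- per-token log-probs of the response under the policy
    ref_logprobs    -- per-token log-probs of the same response under the reference
    beta            -- penalty strength (hypothetical default, for illustration)
    """
    if len(policy_logprobs) != len(ref_logprobs):
        raise ValueError("both models must score the same tokens")
    # Summed log-ratio over the sampled tokens: a single-sample estimate of
    # the KL divergence between the policy and the reference.
    log_ratio = sum(p - r for p, r in zip(policy_logprobs, ref_logprobs))
    return reward - beta * log_ratio


# The policy assigns higher probability to its own sample than the
# reference does, so the penalty lowers the effective reward.
penalized = kl_penalized_reward(1.0, [-1.0, -2.0], [-1.5, -2.5], beta=0.1)
```

When the two models assign identical log-probabilities the penalty is zero and the reward passes through unchanged; the further the policy drifts from the reference on its own samples, the more reward it gives up.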
