Setting up a Kubernetes cluster with kubeadm

kubeadm

kubeadm is a Kubernetes deployment tool. It provides kubeadm init and kubeadm join for bringing up a Kubernetes cluster quickly. A cluster can be deployed with just two commands:

- Use kubeadm init to create a Master node

- Use kubeadm join <Master node IP and port> to join a Node to the current cluster

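Goals

- Install Docker, kubeadm, kubelet, and kubectl on all nodes

- Deploy the Kubernetes Master

- Deploy the container network plugin

- Deploy the Kubernetes Nodes and join them to the cluster

- Deploy the Dashboard web UI to view Kubernetes resources visually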

Environment preparation

| Role | IP |
|---|---|
| k8s-master | 192.168.1.16 |
| k8s-node1 | 192.168.1.30 |
| k8s-node2 | 192.168.1.31 |

Installation

System initialization

Disable the firewall

systemctl stop firewalld

systemctl disable firewalld

Disable SELinux

sed -i 's/enforcing/disabled/' /etc/selinux/config && setenforce 0
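The sed expression above replaces the first "enforcing" on every line, which includes the comment lines in the file. A narrower variant, sketched here on a throwaway sample file rather than the real /etc/selinux/config, touches only the SELINUX= setting. The sample contents and the helper name disable_selinux_in are illustrative assumptions.

```shell
# Rewrite only the SELINUX= line of the given config file (hypothetical helper).
disable_selinux_in() {
    conf=$1
    sed 's/^SELINUX=enforcing$/SELINUX=disabled/' "$conf" > "$conf.new" &&
        mv "$conf.new" "$conf"
}

# Demonstrate on a temporary sample, not on /etc/selinux/config.
selinux_sample=$(mktemp)
cat > "$selinux_sample" << 'EOF'
# enforcing - SELinux security policy is enforced.
SELINUX=enforcing
SELINUXTYPE=targeted
EOF

disable_selinux_in "$selinux_sample"
cat "$selinux_sample"
```

On a real node the same function would be pointed at /etc/selinux/config, followed by setenforce 0 as in the original command.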

Disable swap

# Disable temporarily

swapoff -a

# Disable permanently (takes effect after the next reboot)

sed -ri 's/.*swap.*/#&/' /etc/fstab
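The sed expression comments out every fstab line that mentions swap. A small sketch, run on a made-up sample fstab instead of the real file, shows the effect. The device names are illustrative assumptions, and -E is used as the portable spelling of -r.

```shell
# Sample fstab content (illustrative; real device names will differ).
fstab_sample=$(mktemp)
cat > "$fstab_sample" << 'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF

# Same substitution as above, printed instead of edited in place:
# any line containing "swap" gets a leading '#'.
commented=$(sed -E 's/.*swap.*/#&/' "$fstab_sample")
printf '%s\n' "$commented"
```

Running the substitution a second time would add another '#' to the same line, which is harmless but worth knowing when re-running the setup.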

Set the hostname

hostnamectl set-hostname <hostname>

Add hosts entries on the master

cat >> /etc/hosts << EOF

192.168.1.16 k8s-master

192.168.1.30 k8s-node1

192.168.1.31 k8s-node2

EOF
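Because cat >> appends unconditionally, running the block twice duplicates the entries. A minimal idempotent sketch follows; add_host_entry is a hypothetical helper, and the demo writes to a temporary file instead of /etc/hosts.

```shell
# Append "IP NAME" to a hosts file only if NAME is not already listed
# as a hostname or alias (hypothetical helper).
add_host_entry() {
    file=$1 ip=$2 name=$3
    if ! awk -v n="$name" '{ for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$file"; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

hosts_file=$(mktemp)
for entry in "192.168.1.16 k8s-master" "192.168.1.30 k8s-node1" "192.168.1.31 k8s-node2"; do
    # Split "IP NAME" on the single space.
    add_host_entry "$hosts_file" "${entry% *}" "${entry#* }"
done

# A repeated call adds nothing.
add_host_entry "$hosts_file" 192.168.1.16 k8s-master
cat "$hosts_file"
```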

Pass bridged IPv4 traffic to the iptables chains

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

# Apply the settings

sysctl --system
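The two net.bridge keys only exist once the br_netfilter kernel module is loaded, so on a fresh host sysctl --system may not be able to apply them. A hedged sketch of checking a config file for both keys is shown below; bridge_keys_enabled is a hypothetical helper, and the demo uses a temporary copy rather than /etc/sysctl.d/k8s.conf.

```shell
# On a real node (as root) the module would be loaded first:
#   modprobe br_netfilter

# Succeed only if both bridge keys are set to 1 in the given file.
bridge_keys_enabled() {
    awk -F= '
        { gsub(/[ \t]/, "") }   # strip whitespace; fields are re-split on "="
        $1 == "net.bridge.bridge-nf-call-iptables"  && $2 == "1" { v4 = 1 }
        $1 == "net.bridge.bridge-nf-call-ip6tables" && $2 == "1" { v6 = 1 }
        END { exit !(v4 && v6) }
    ' "$1"
}

sysctl_conf=$(mktemp)
cat > "$sysctl_conf" << 'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

if bridge_keys_enabled "$sysctl_conf"; then
    echo "bridge sysctls configured"
else
    echo "bridge sysctls missing"
fi
```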

Time synchronization

yum install ntpdate -y

ntpdate time.windows.com

Install Docker/kubeadm/kubelet/kubectl on all nodes

Install Docker

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

yum -y install docker-ce-18.06.1.ce-3.el7

systemctl enable docker && systemctl start docker

docker --version

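Add the Alibaba Cloud YUM repository

Set the Docker registry mirror address (restart Docker afterwards for the change to take effect)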

cat > /etc/docker/daemon.json << EOF

{

"registry-mirrors": ["https://b9pmyelo.mirror.aliyuncs.com"]

}

EOF

Add the following yum repository definition to /etc/yum.repos.d/kubernetes.repo:

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
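One way to create the file is a here-document, in the same style as the other configuration steps. This sketch writes to a path passed as an argument (write_k8s_repo is a hypothetical helper) so it can be tried on a temporary file before being pointed at /etc/yum.repos.d/kubernetes.repo. The two gpgkey URLs are kept on one line, separated by a space.

```shell
# Write the Kubernetes repo definition to the given path (hypothetical helper).
write_k8s_repo() {
    cat > "$1" << 'EOF'
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
}

# Rehearse on a temporary file; on a node the argument would be
# /etc/yum.repos.d/kubernetes.repo.
repo_file=$(mktemp)
write_k8s_repo "$repo_file"
grep '^baseurl=' "$repo_file"
```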

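Install kubeadm, kubelet, and kubectl (pinned to the version passed to kubeadm init below, since an unpinned install pulls the newest packages in the repository)

yum install -y kubelet-1.23.1 kubeadm-1.23.1 kubectl-1.23.1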

systemctl enable kubelet

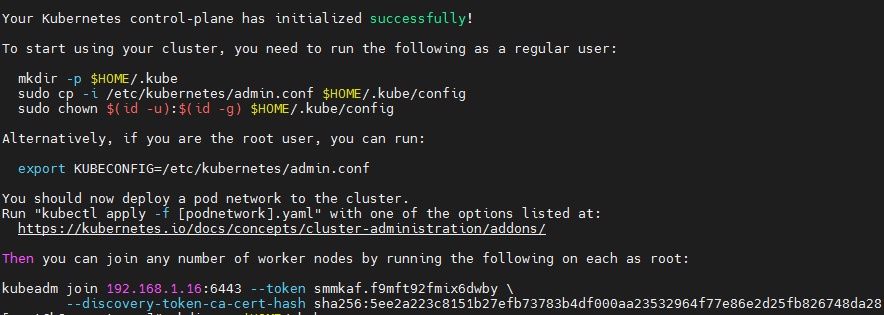

Deploy the Kubernetes Master

kubeadm init \
  --apiserver-advertise-address=192.168.1.16 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.23.1 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16

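Parameter notes:

--kubernetes-version v1.23.1: the Kubernetes version to deploy

--apiserver-advertise-address: the IP address advertised to the other components; normally the IP address of the master node

--service-cidr: the service network; it must not conflict with the node network

--pod-network-cidr: the pod network; it must not conflict with the node network or the service network

--image-repository registry.aliyuncs.com/google_containers: the image repository. The default registry k8s.gcr.io is not reachable from mainland China, so the Alibaba Cloud mirror is used here.

If the Kubernetes version is very recent, the Alibaba Cloud mirror may not have the matching images yet, and they have to be obtained from somewhere else.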
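The non-overlap requirement for the node, service, and pod networks can be checked before running kubeadm init. Below is a minimal sketch in POSIX shell arithmetic; ip_to_int and cidr_overlap are hypothetical helpers, and 192.168.1.0/24 is an assumed node subnet inferred from the IP addresses in the environment table.

```shell
# Convert a dotted IPv4 address to an integer.
ip_to_int() {
    old_ifs=$IFS; IFS=.
    set -- $1            # split the address into its four octets
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed if the two CIDR blocks share at least one address.
cidr_overlap() {
    a_size=$(( 1 << (32 - ${1#*/}) ))
    b_size=$(( 1 << (32 - ${2#*/}) ))
    a_start=$(ip_to_int "${1%/*}")
    b_start=$(ip_to_int "${2%/*}")
    # Align each start to its network boundary.
    a_start=$(( a_start / a_size * a_size ))
    b_start=$(( b_start / b_size * b_size ))
    a_end=$(( a_start + a_size - 1 ))
    b_end=$(( b_start + b_size - 1 ))
    [ "$a_start" -le "$b_end" ] && [ "$b_start" -le "$a_end" ]
}

node_net="192.168.1.0/24"     # assumption: subnet of the three hosts
service_net="10.96.0.0/12"    # --service-cidr
pod_net="10.244.0.0/16"       # --pod-network-cidr

for pair in "$node_net $service_net" "$node_net $pod_net" "$service_net $pod_net"; do
    set -- $pair
    if cidr_overlap "$1" "$2"; then
        echo "$1 overlaps $2"
    else
        echo "$1 and $2 are disjoint"
    fi
done
```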

Configure kubectl

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

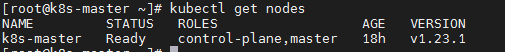

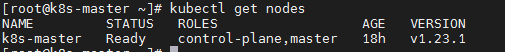

View node information

kubectl get nodes

Configure flannel

Create the manifest file:

vim kube-flannel.yml

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  # Network must match the --pod-network-cidr passed to kubeadm init
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.14.0
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.14.0
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
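flannel expects the Network value in net-conf.json to agree with the --pod-network-cidr passed to kubeadm init. A hedged sketch of comparing the two before applying the manifest follows; flannel_network is a hypothetical helper, and the demo runs on a short excerpt with an assumed value written to a temporary file rather than on the full kube-flannel.yml.

```shell
# Print the value of "Network" found in a flannel manifest (hypothetical helper).
flannel_network() {
    sed -n 's/.*"Network": *"\([^"]*\)".*/\1/p' "$1"
}

pod_cidr="10.244.0.0/16"   # value given to --pod-network-cidr

# Excerpt standing in for kube-flannel.yml.
excerpt=$(mktemp)
cat > "$excerpt" << 'EOF'
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
EOF

if [ "$(flannel_network "$excerpt")" = "$pod_cidr" ]; then
    echo "flannel Network matches the pod CIDR"
else
    echo "mismatch: flannel uses $(flannel_network "$excerpt")"
fi
```

On the master the same check would be run as flannel_network kube-flannel.yml.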

Apply the YAML file:

kubectl apply -f kube-flannel.yml

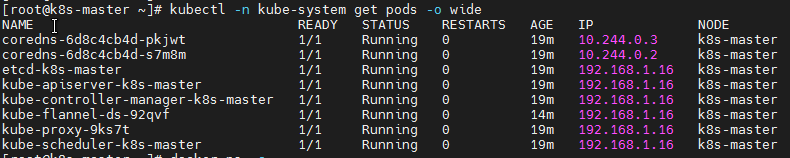

Check the flannel deployment:

kubectl -n kube-system get pods -o wide

Check the node status:

kubectl get nodes

Deploy the Kubernetes Nodes

To add the new nodes to the cluster, run the kubeadm join command printed by kubeadm init on 192.168.1.30 and 192.168.1.31:

kubeadm join 192.168.1.16:6443 --token smmkaf.f9mft92fmix6dwby \
  --discovery-token-ca-cert-hash sha256:5ee2a223c8151b27efb73783b4df000aa23532964f77e86e2d25fb826748da28
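The token and hash are specific to each kubeadm init run, and bootstrap tokens expire after 24 hours by default; running kubeadm token create --print-join-command on the master prints a fresh command. As a small sketch, the shape of the two values can be sanity-checked before running the join. valid_token and valid_ca_hash are hypothetical helpers.

```shell
# Bootstrap tokens have the form [a-z0-9]{6}.[a-z0-9]{16}.
valid_token() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# The CA certificate hash is "sha256:" followed by 64 hex digits.
valid_ca_hash() {
    printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

token="smmkaf.f9mft92fmix6dwby"
ca_hash="sha256:5ee2a223c8151b27efb73783b4df000aa23532964f77e86e2d25fb826748da28"

if valid_token "$token" && valid_ca_hash "$ca_hash"; then
    echo "join parameters look well-formed"
else
    echo "join parameters are malformed; regenerate them on the master"
fi
```

A well-formed token can still be expired, so this only catches copy-and-paste damage.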

Test the Kubernetes cluster

Create a Pod in Kubernetes and verify that it runs correctly:

kubectl create deployment nginx --image=nginx

kubectl expose deployment nginx --port 80 --type NodePort

kubectl get pod,svc

Access URL: http://NodeIP:Port
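The Port in the URL is the NodePort that Kubernetes assigned to the service. On a live cluster it can be read with kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'. The sketch below shows the same idea offline by parsing a PORT(S) column value; node_port_of is a hypothetical helper and 31234 is a made-up sample value, not real cluster output.

```shell
# Extract the node port from a PORT(S) value such as "80:31234/TCP".
node_port_of() {
    port=${1#*:}          # drop the service port and the colon
    echo "${port%%/*}"    # drop the protocol suffix
}

ports="80:31234/TCP"      # sample value (assumption)
node_ip="192.168.1.30"    # any node IP from the environment table

echo "http://$node_ip:$(node_port_of "$ports")"
```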

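Problems encountered during installation

Upgrading the system kernel

The 3.10.x kernel that ships with CentOS 7.x has a number of bugs that make Docker and Kubernetes unstable.

ELRepo is a third-party repository for CentOS that makes it possible to upgrade to the latest kernel.

To enable the ELRepo repository on CentOS 7, run: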

rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-2.el7.elrepo.noarch.rpm

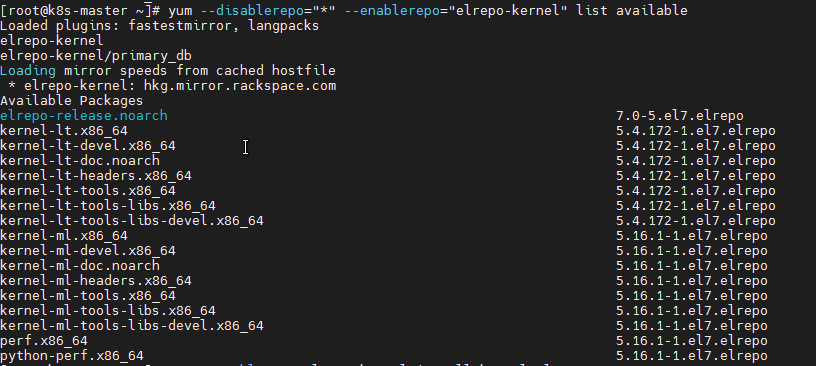

Once the repository is enabled, the available kernel packages can be listed with:

yum --disablerepo="*" --enablerepo="elrepo-kernel" list available

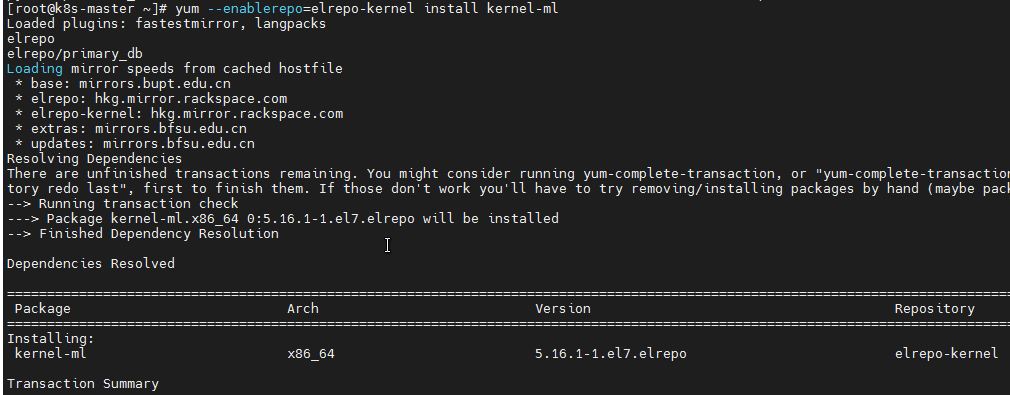

Install the latest mainline stable kernel:

yum --enablerepo=elrepo-kernel install kernel-ml

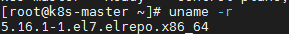

Finally, reboot the machine to boot into the new kernel, then run the following command to check the kernel version:

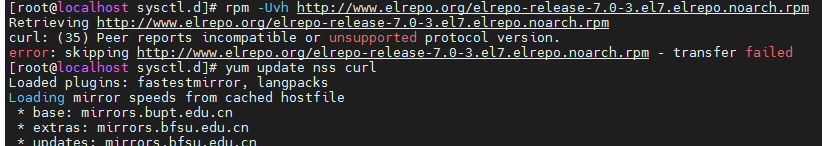
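The check command itself survives only in the screenshot. A common way to confirm the running kernel is uname -r; the sketch below, built around the hypothetical helper kernel_newer_than_3_10, compares a release string against the stock 3.10 series. The sample release strings are illustrative.

```shell
# Succeed if the release string is newer than the 3.10 series (hypothetical helper).
kernel_newer_than_3_10() {
    release=$1
    major=${release%%.*}      # text before the first dot
    rest=${release#*.}
    minor=${rest%%.*}         # text between the first and second dot
    [ "$major" -gt 3 ] || { [ "$major" -eq 3 ] && [ "$minor" -gt 10 ]; }
}

# On a node the argument would be "$(uname -r)".
for r in "3.10.0-1160.el7.x86_64" "5.16.2-1.el7.elrepo.x86_64"; do
    if kernel_newer_than_3_10 "$r"; then
        echo "$r: upgraded kernel"
    else
        echo "$r: stock 3.10 kernel or older"
    fi
done
```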

curl: Peer reports incompatible or unsupported protocol version

The cause turned out to be outdated curl and nss packages; the fix is to update nss and curl.

yum update nss curl

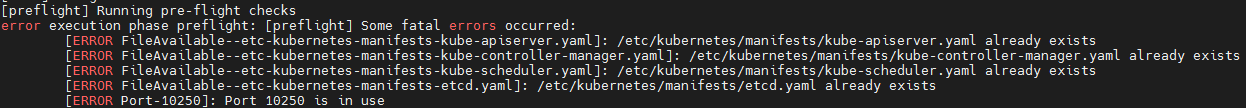

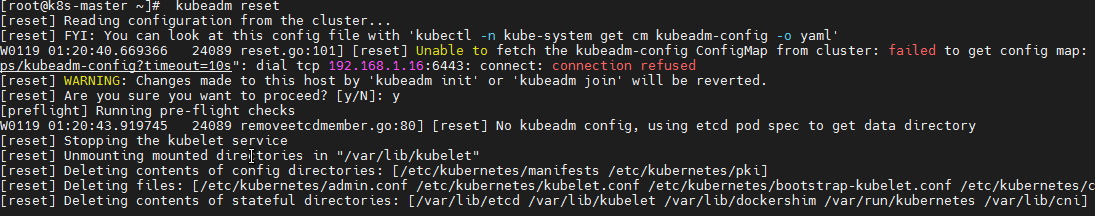

Kubernetes initialization problem (configuration files already exist)

kubeadm reset

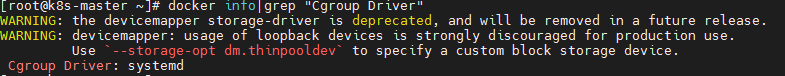

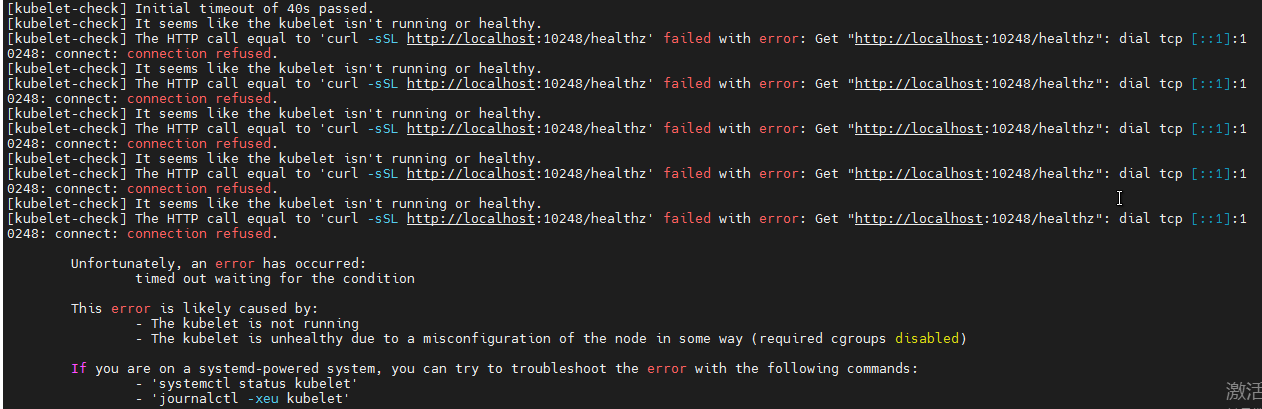

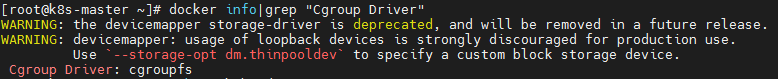
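kubeadm reset itself reports that it does not remove the CNI configuration under /etc/cni/net.d or the kubeconfig in $HOME/.kube/config, so leftovers from a failed attempt may need to be deleted by hand. A cautious sketch follows; cleanup_after_reset is a hypothetical helper that takes a root prefix and a home directory so it can be rehearsed on a scratch directory first.

```shell
# Remove the files that kubeadm reset leaves behind (hypothetical helper).
# $1 is a root prefix ("" on a real node), $2 is the user's home directory.
cleanup_after_reset() {
    root=$1 home=$2
    rm -rf "$root/etc/cni/net.d"
    rm -f "$home/.kube/config"
}

# Rehearsal on a scratch tree; on a real node the call would be
#   cleanup_after_reset "" "$HOME"
scratch=$(mktemp -d)
mkdir -p "$scratch/etc/cni/net.d" "$scratch/home/.kube"
touch "$scratch/etc/cni/net.d/10-flannel.conflist" "$scratch/home/.kube/config"

cleanup_after_reset "$scratch" "$scratch/home"
ls -A "$scratch/etc/cni"
```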

Kubernetes initialization problem (failed to run Kubelet: misconfiguration: kubelet cgroup driver: "systemd" is different from docker cgroup driver: "cgroupfs")

Initialization fails with a message that the kubelet is not healthy.

Checking the kubelet with systemctl status kubelet shows: error: failed to run Kubelet: failed to create kubelet: misconfiguration: kubelet cgroup driver: "systemd" is different from docker cgroup driver: "cgroupfs".

To fix Docker, add "exec-opts": ["native.cgroupdriver=systemd"] to /etc/docker/daemon.json:

{

"registry-mirrors":["https://docker.mirrors.ustc.edu.cn"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

Modify the kubelet configuration:

cat > /var/lib/kubelet/config.yaml <<EOF

apiVersion: kubelet.config.k8s.io/v1beta1

kind: KubeletConfiguration

cgroupDriver: systemd

EOF
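Note that cat > replaces the whole file, and kubeadm also writes its generated kubelet settings to /var/lib/kubelet/config.yaml. A gentler variant, sketched here as an assumption rather than as the author's method, changes only the cgroupDriver line. set_cgroup_driver is a hypothetical helper, and the demo edits a temporary sample file.

```shell
# Set cgroupDriver in a kubelet config file, keeping all other lines
# (hypothetical helper). Appends the key if it is not present yet.
set_cgroup_driver() {
    file=$1 driver=$2
    if grep -q '^cgroupDriver:' "$file"; then
        sed "s/^cgroupDriver:.*/cgroupDriver: $driver/" "$file" > "$file.new" &&
            mv "$file.new" "$file"
    else
        printf 'cgroupDriver: %s\n' "$driver" >> "$file"
    fi
}

# Sample config (illustrative; a real file has many more fields).
kubelet_conf=$(mktemp)
cat > "$kubelet_conf" << 'EOF'
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: cgroupfs
clusterDomain: cluster.local
EOF

set_cgroup_driver "$kubelet_conf" systemd
cat "$kubelet_conf"
```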

Restart Docker and kubelet:

systemctl daemon-reload

systemctl restart docker

systemctl restart kubelet

Check whether docker info | grep "Cgroup Driver" outputs Cgroup Driver: systemd: