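This is a lab report for a deep learning course.

It trains five deep neural network architectures (MLP, LeNet, AlexNet, GoogLeNet, and ResNet) on three datasets (MNIST, Fashion MNIST, and HWDB1), using TensorFlow 2 as the framework. The datasets and the .ipynb notebook for this report can be downloaded here.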

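Experimental results

The results are summarized in the table below.

Test accuracy of each model on each dataset

| Model | MNIST | Fashion MNIST | HWDB1 |
| --- | --- | --- | --- |
| MLP | 97.76% | 87.73% | 84.17% |
| LeNet | 98.68% | 85.82% | 91.33% |
| AlexNet | 98.91% | 90.57% | 89.67% |
| GoogLeNet | 99.27% | 90.27% | 91.50% |
| ResNet | 99.21% | 91.35% | 93.67% |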

Importing the required libraries

import os
import warnings
import gzip
import logging  # used by logging.disable() in the environment setup
import numpy as np
import tensorflow as tf

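Environment setup

warnings.filterwarnings("ignore")
os.environ["TF_CPP_MIN_LOG_LEVEL"] = '2'  # hide TensorFlow INFO and WARNING logs; show errors only
os.environ['CUDA_VISIBLE_DEVICES'] = '-1'  # hide all GPUs so that training runs on the CPU
logging.disable(logging.WARNING)  # suppress Python log records at WARNING level (30) and below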

Loading the MNIST data

def load_mnist():
    # Read the training and test arrays from a local copy of mnist.npz.
    with np.load(r'./datasets/mnist.npz', allow_pickle=True) as f:
        x_train, y_train = f['x_train'], f['y_train']
        x_test, y_test = f['x_test'], f['y_test']
    return (x_train, y_train), (x_test, y_test)

Loading the Fashion MNIST data

def load_fashion_mnist():
    dirname = os.path.join('datasets', 'fashion-mnist')
    files = ['train-labels-idx1-ubyte.gz', 'train-images-idx3-ubyte.gz',
             't10k-labels-idx1-ubyte.gz', 't10k-images-idx3-ubyte.gz']
    paths = []
    for fname in files:
        paths.append(os.path.join(dirname, fname))
    # Label files carry an 8-byte header and image files a 16-byte header,
    # which the offset argument skips.
    with gzip.open(paths[0], 'rb') as lbpath:
        y_train = np.frombuffer(lbpath.read(), np.uint8, offset=8)
    with gzip.open(paths[1], 'rb') as imgpath:
        x_train = np.frombuffer(imgpath.read(), np.uint8,
                                offset=16).reshape(len(y_train), 28, 28)
    with gzip.open(paths[2], 'rb') as lbpath:
        y_test = np.frombuffer(lbpath.read(), np.uint8, offset=8)
    with gzip.open(paths[3], 'rb') as imgpath:
        x_test = np.frombuffer(imgpath.read(), np.uint8,
                               offset=16).reshape(len(y_test), 28, 28)
    return (x_train, y_train), (x_test, y_test)
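The `offset=8` and `offset=16` arguments skip the IDX file headers: a 4-byte magic number followed by one 32-bit big-endian size per dimension (one dimension for labels, three for images). The following is a minimal sketch of that layout using only the standard library and tiny synthetic byte strings; `parse_idx_header` is a hypothetical helper and not part of the report's code.

```python
import struct

def parse_idx_header(raw):
    """Return (dims, data_offset) for an IDX byte string.

    Hypothetical helper for illustration. The magic number is two zero
    bytes, a data-type code, and the number of dimensions; one big-endian
    32-bit size per dimension follows.
    """
    zero1, zero2, dtype_code, ndim = struct.unpack(">BBBB", raw[:4])
    if zero1 != 0 or zero2 != 0:
        raise ValueError("not an IDX file")
    dims = struct.unpack(">" + "I" * ndim, raw[4:4 + 4 * ndim])
    return dims, 4 + 4 * ndim

# Synthetic examples: 0x08 is the IDX type code for unsigned bytes.
label_file = struct.pack(">BBBBI", 0, 0, 0x08, 1, 3) + bytes([9, 0, 4])
image_file = struct.pack(">BBBBIII", 0, 0, 0x08, 3, 2, 28, 28) + bytes(2 * 28 * 28)

label_dims, label_offset = parse_idx_header(label_file)
image_dims, image_offset = parse_idx_header(image_file)
print(label_dims, label_offset)   # (3,) 8
print(image_dims, image_offset)   # (2, 28, 28) 16
```

Reading the sizes from the header, rather than hard-coding 28, would let the same loader handle IDX files with other image dimensions.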

Loading the HWDB1 data

# Image preprocessing: resize to 24x24 and normalize
def preprocess_image(image):
    image_size = 24
    img_tensor = tf.image.decode_jpeg(image, channels=1)
    img_tensor = tf.image.resize(img_tensor, [image_size, image_size])
    img_tensor /= 255.0  # normalize to [0,1] range
    return img_tensor

def load_and_preprocess_image(path):
    image = tf.io.read_file(path)
    return preprocess_image(image)

def load_HWDB1():
    root_path = './datasets/HWDB1'
    train_images = []
    train_labels = []
    test_images = []
    test_labels = []
    temp = []
    with open(os.path.join(root_path, 'train.txt'), 'r') as f:
        for line in f:
            line = line.strip('\n')
            if line != '':  # compare by value; `is not ''` tests object identity
                imgpath = line
                # The label is the second backslash-separated path component.
                label = line.split('\\')[1]
                train_images.append(imgpath)
                train_labels.append(int(label))
    for item in train_images:
        img = load_and_preprocess_image(item)
        temp.append(img)
    x_train = np.array(temp)
    y_train = np.array(train_labels)
    temp = []
    with open(os.path.join(root_path, 'test.txt'), 'r') as f:
        for line in f:
            line = line.strip('\n')
            if line != '':
                imgpath = line
                label = line.split('\\')[1]
                test_images.append(imgpath)
                test_labels.append(int(label))
    for item in test_images:
        img = load_and_preprocess_image(item)
        temp.append(img)
    x_test = np.array(temp)
    y_test = np.array(test_labels)
    return (x_train, y_train), (x_test, y_test)
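`load_HWDB1` takes the class label from the second backslash-separated component of each line in the index file, which assumes Windows-style paths. The sketch below isolates that step and also accepts forward slashes. `label_from_line` and the example paths are hypothetical, since the actual layout of train.txt is not shown in this report.

```python
def label_from_line(line):
    """Extract the integer class label from one line of the index file.

    Hypothetical helper: takes the second path component as the label,
    as load_HWDB1 does, but treats both slash styles as separators.
    """
    parts = line.strip().replace("\\", "/").split("/")
    return int(parts[1])

# Hypothetical index-file lines; the real train.txt layout may differ.
print(label_from_line("train\\3\\0001.jpg\n"))  # 3
print(label_from_line("train/7/0042.jpg"))      # 7
```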

Defining the MNIST data loader

class MNISTLoader():
    def __init__(self):
        (self.train_data, self.train_label), (self.test_data,
                                              self.test_label) = load_mnist()
        # MNIST images are stored as uint8 (values 0-255). Scale them to floats
        # in [0, 1] and append a trailing dimension for the color channel.
        self.train_data = np.expand_dims(self.train_data.astype(
            np.float32) / 255.0, axis=-1)  # [60000, 28, 28, 1]
        self.test_data = np.expand_dims(self.test_data.astype(
            np.float32) / 255.0, axis=-1)  # [10000, 28, 28, 1]
        self.train_label = self.train_label.astype(np.int32)  # [60000]
        self.test_label = self.test_label.astype(np.int32)  # [10000]
        self.num_train_data, self.num_test_data = self.train_data.shape[
            0], self.test_data.shape[0]

    def get_batch(self, batch_size):
        # Draw batch_size random samples (with replacement) from the training set
        index = np.random.randint(0, self.num_train_data, batch_size)
        return self.train_data[index, :], self.train_label[index]
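Because `get_batch` draws indices with `np.random.randint`, it samples with replacement, so one "epoch" of `num_train_data // batch_size` batches does not visit every training sample exactly once. The sketch below contrasts this with a single shuffled pass. It uses the standard `random` module in place of NumPy, and both generator names are hypothetical.

```python
import random

def random_batches(num_samples, batch_size, rng):
    """Index batches drawn with replacement, as get_batch does."""
    for _ in range(num_samples // batch_size):
        yield [rng.randrange(num_samples) for _ in range(batch_size)]

def shuffled_batches(num_samples, batch_size, rng):
    """Index batches from one shuffled pass: every sample appears once."""
    order = list(range(num_samples))
    rng.shuffle(order)
    for start in range(0, num_samples, batch_size):
        yield order[start:start + batch_size]

rng = random.Random(0)
seen_random = {i for batch in random_batches(1000, 50, rng) for i in batch}
seen_shuffled = {i for batch in shuffled_batches(1000, 50, rng) for i in batch}
print(len(seen_random))    # fewer than 1000: some samples are never drawn
print(len(seen_shuffled))  # 1000
```

Either scheme gives an unbiased gradient estimate; the shuffled pass simply guarantees full coverage of the data in each epoch.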

Defining the Fashion MNIST data loader

class Fashion_MNISTLoader():
    def __init__(self):
        (self.train_data, self.train_label), (self.test_data,
                                              self.test_label) = load_fashion_mnist()
        # Scale pixels to [0, 1] and append the channel dimension.
        self.train_data = np.expand_dims(
            self.train_data.astype(np.float32) / 255.0, axis=-1)
        self.test_data = np.expand_dims(
            self.test_data.astype(np.float32) / 255.0, axis=-1)
        self.train_label = self.train_label.astype(np.int32)
        self.test_label = self.test_label.astype(np.int32)
        self.num_train_data, self.num_test_data = self.train_data.shape[
            0], self.test_data.shape[0]

    def get_batch(self, batch_size):
        # Draw batch_size random samples (with replacement) from the training set
        index = np.random.randint(0, self.num_train_data, batch_size)
        return self.train_data[index, :], self.train_label[index]

Defining the HWDB1 data loader

class HWDB1Loader():
    def __init__(self):
        # The images are already normalized and have a channel dimension,
        # because load_HWDB1 preprocesses them while loading.
        (self.train_data, self.train_label), (self.test_data,
                                              self.test_label) = load_HWDB1()
        self.train_label = self.train_label.astype(np.int32)
        self.test_label = self.test_label.astype(np.int32)
        self.num_train_data, self.num_test_data = self.train_data.shape[
            0], self.test_data.shape[0]

    def get_batch(self, batch_size):
        # Draw batch_size random samples (with replacement) from the training set
        index = np.random.randint(0, self.num_train_data, batch_size)
        return self.train_data[index, :], self.train_label[index]

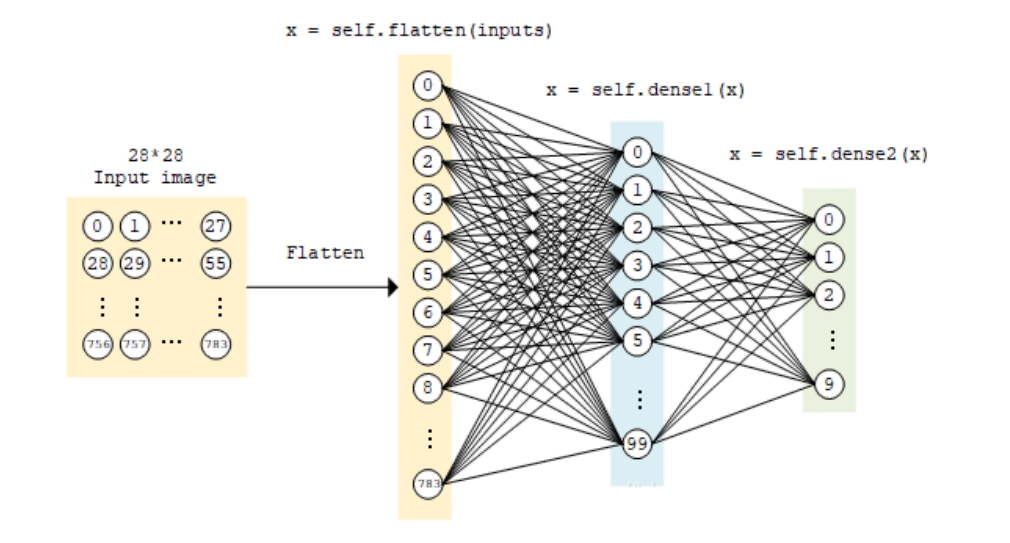

Defining the multilayer perceptron (MLP)

Two fully connected layers

class MLP(tf.keras.Model):
    def __init__(self):
        super().__init__()
        # Flatten collapses every dimension except the first (batch_size)
        self.flatten = tf.keras.layers.Flatten()
        self.dense1 = tf.keras.layers.Dense(units=100, activation=tf.nn.relu)
        self.dense2 = tf.keras.layers.Dense(units=10)

    def call(self, inputs):         # [batch_size, 28, 28, 1]
        x = self.flatten(inputs)    # [batch_size, 784]
        x = self.dense1(x)          # [batch_size, 100]
        x = self.dense2(x)          # [batch_size, 10]
        output = tf.nn.softmax(x)
        return output

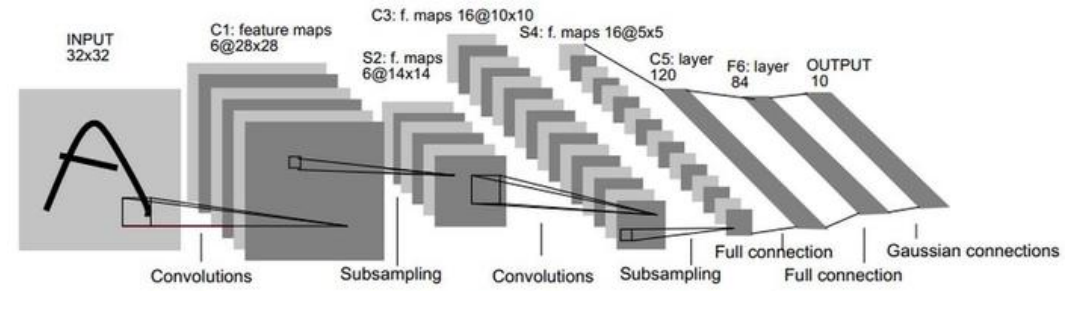

Defining the LeNet architecture

from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, MaxPool2D, Dropout, Flatten, Dense
from tensorflow.keras import Model

class LeNet(Model):
    def __init__(self):
        super(LeNet, self).__init__()
        # The original LeNet takes 32x32 inputs; the MNIST images here are 28x28
        self.c1 = Conv2D(filters=6, kernel_size=(5, 5), activation='sigmoid')
        self.p1 = MaxPool2D(pool_size=(2, 2), strides=2)
        self.c2 = Conv2D(filters=16, kernel_size=(5, 5), activation='sigmoid')
        self.p2 = MaxPool2D(pool_size=(2, 2), strides=2)
        self.flatten = Flatten()
        self.f1 = Dense(120, activation='sigmoid')
        self.f2 = Dense(84, activation='sigmoid')
        self.f3 = Dense(10, activation='softmax')

    # Forward pass
    def call(self, inputs):   # [batch_size, 28, 28, 1]
        # Output size = floor((input size - kernel size + 2 * padding) / stride) + 1
        x = self.c1(inputs)   # [batch_size, 24, 24, 6]
        x = self.p1(x)        # [batch_size, 12, 12, 6]
        x = self.c2(x)        # [batch_size, 8, 8, 16]
        x = self.p2(x)        # [batch_size, 4, 4, 16]
        x = self.flatten(x)   # [batch_size, 256]
        x = self.f1(x)        # [batch_size, 120]
        x = self.f2(x)        # [batch_size, 84]
        y = self.f3(x)        # [batch_size, 10]
        return y
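The shape comments in LeNet follow from the convolution output-size formula quoted in the code. As a quick check, the plain-Python sketch below applies that formula layer by layer for a 28x28 input; `conv_output_size` is a hypothetical helper, not a TensorFlow function.

```python
def conv_output_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer.

    Implements floor((size - kernel + 2 * padding) / stride) + 1.
    """
    return (size - kernel + 2 * padding) // stride + 1

size = 28                                   # MNIST input is 28x28
size = conv_output_size(size, kernel=5)     # c1 -> 24
size = conv_output_size(size, 2, stride=2)  # p1 -> 12
size = conv_output_size(size, kernel=5)     # c2 -> 8
size = conv_output_size(size, 2, stride=2)  # p2 -> 4
flattened = size * size * 16                # 16 feature maps from c2
print(size, flattened)                      # 4 256
```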

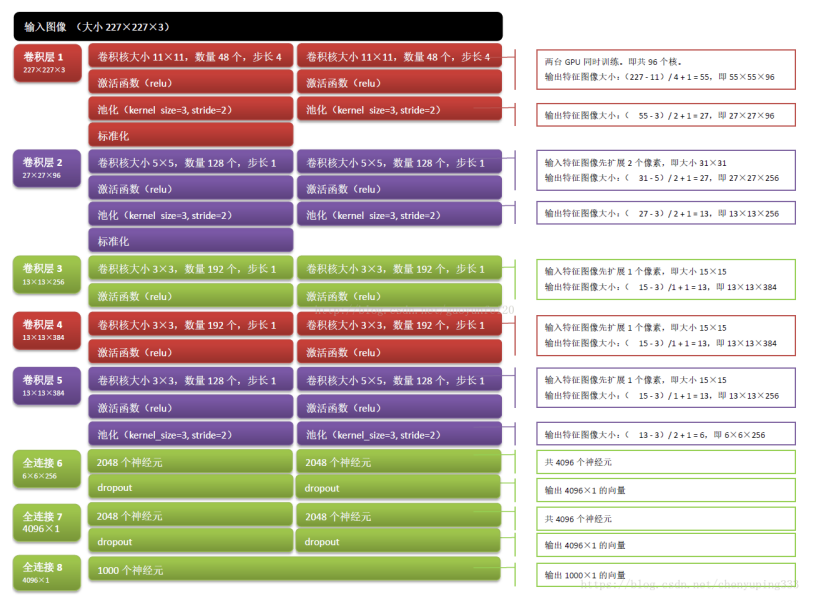

Defining the AlexNet architecture

Key ideas in AlexNet:

1. ReLU as the activation function

2. A normalization layer after convolution (the original network uses local response normalization; this implementation uses batch normalization, BN, instead)

3. Dropout to reduce overfitting

from tensorflow.keras import layers, models, Model, Sequential

class AlexNet(Model):
    def __init__(self):
        super(AlexNet, self).__init__()
        # Stack convolution / normalization / activation / pooling layers;
        # the network has 8 weighted layers (5 convolutional, 3 dense)
        # 96 channels; the kernel is reduced to 3x3 (11x11 in the original)
        self.c1 = Conv2D(filters=96, kernel_size=(3, 3))
        self.b1 = BatchNormalization()
        # ReLU activation
        self.a1 = Activation('relu')
        # 3x3 max pooling with stride 2
        self.p1 = MaxPool2D(pool_size=(3, 3), strides=2)
        self.c2 = Conv2D(filters=256, kernel_size=(3, 3))
        self.b2 = BatchNormalization()
        self.a2 = Activation('relu')
        self.p2 = MaxPool2D(pool_size=(3, 3), strides=2)
        # 384 channels, 3x3 kernel, zero padding ('same'), ReLU activation
        self.c3 = Conv2D(filters=384, kernel_size=(3, 3), padding='same', activation='relu')
        self.c4 = Conv2D(filters=384, kernel_size=(3, 3), padding='same', activation='relu')
        self.c5 = Conv2D(filters=256, kernel_size=(3, 3), padding='same', activation='relu')
        self.p3 = MaxPool2D(pool_size=(3, 3), strides=2)
        # Flatten the feature maps into a vector
        self.flatten = Flatten()
        # Fully connected layer with 2048 units and ReLU activation
        self.f1 = Dense(2048, activation='relu')
        # Drop 50% of the units
        self.d1 = Dropout(0.5)
        self.f2 = Dense(2048, activation='relu')
        self.d2 = Dropout(0.5)
        # Output layer with 10 units and softmax activation
        self.f3 = Dense(10, activation='softmax')

    # Forward pass
    def call(self, inputs):   # [batch_size, 28, 28, 1]
        # Output size = floor((input size - kernel size + 2 * padding) / stride) + 1
        x = self.c1(inputs)   # [batch_size, 26, 26, 96]
        x = self.b1(x)        # [batch_size, 26, 26, 96]
        x = self.a1(x)        # [batch_size, 26, 26, 96]
        x = self.p1(x)        # [batch_size, 12, 12, 96]   3x3 pool, stride 2: floor((26-3)/2)+1 = 12
        x = self.c2(x)        # [batch_size, 10, 10, 256]  12-3+1 = 10
        x = self.b2(x)        # [batch_size, 10, 10, 256]
        x = self.a2(x)        # [batch_size, 10, 10, 256]
        x = self.p2(x)        # [batch_size, 4, 4, 256]    floor((10-3)/2)+1 = 4
        x = self.c3(x)        # [batch_size, 4, 4, 384]    padding='same': output = ceil(input / stride)
        x = self.c4(x)        # [batch_size, 4, 4, 384]
        x = self.c5(x)        # [batch_size, 4, 4, 256]
        x = self.p3(x)        # [batch_size, 1, 1, 256]    floor((4-3)/2)+1 = 1
        x = self.flatten(x)   # [batch_size, 256]
        x = self.f1(x)        # [batch_size, 2048]
        x = self.d1(x)        # [batch_size, 2048]
        x = self.f2(x)        # [batch_size, 2048]
        x = self.d2(x)        # [batch_size, 2048]
        y = self.f3(x)        # [batch_size, 10]
        return y
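AlexNet mixes `padding='valid'` layers (the Keras default for `Conv2D` and `MaxPool2D`) with `padding='same'` convolutions, and the stride-2 pooling divisions are floored. The sketch below traces the spatial size for a 28x28 input under those two rules; `valid_size` and `same_size` are hypothetical helpers written in plain Python.

```python
import math

def valid_size(size, kernel, stride=1):
    """Output size with padding='valid': floor((size - kernel) / stride) + 1."""
    return (size - kernel) // stride + 1

def same_size(size, stride=1):
    """Output size with padding='same': ceil(size / stride)."""
    return math.ceil(size / stride)

sizes = [28]
sizes.append(valid_size(sizes[-1], 3))            # c1
sizes.append(valid_size(sizes[-1], 3, stride=2))  # p1
sizes.append(valid_size(sizes[-1], 3))            # c2
sizes.append(valid_size(sizes[-1], 3, stride=2))  # p2
sizes.append(same_size(sizes[-1]))                # c3, c4, c5 keep the size
sizes.append(valid_size(sizes[-1], 3, stride=2))  # p3
print(sizes)                 # [28, 26, 12, 10, 4, 4, 1]
print(sizes[-1] ** 2 * 256)  # 256 features after Flatten
```

With the 24x24 HWDB1 images the same trace ends at a 1x1 feature map as well, so the dense layers receive 256 features in both cases.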

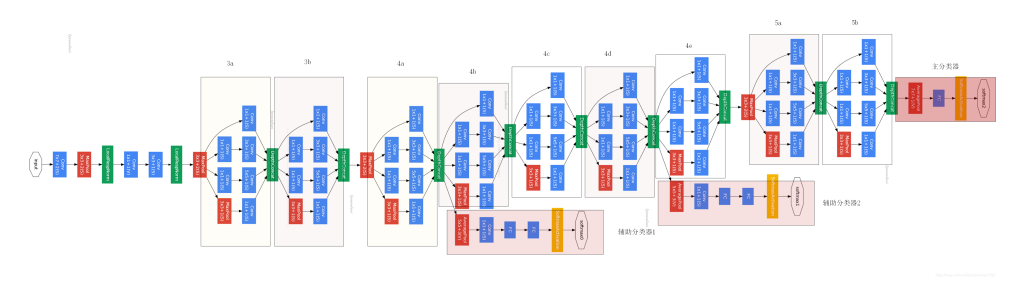

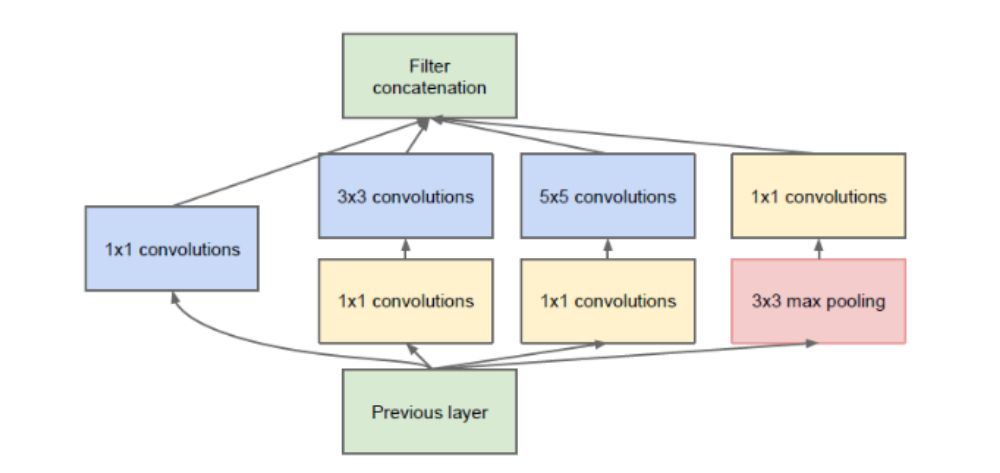

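Defining the GoogLeNet architecture

GoogLeNet (Inception) has four versions; this report uses v3.

Structure of the Inception module:

Key ideas in each GoogLeNet version:

v1: Introduces the Inception module and uses 1x1 convolutions to compress the number of channels, which reduces the parameter count. The Inception module removes the need to hand-pick the filter size of a convolutional layer or to decide whether a layer should be a convolution or a pooling layer: all candidate operations are applied in parallel, their outputs are concatenated, and the network learns which of them it needs.

v2: Introduces batch normalization (BN), which speeds up training and mitigates vanishing gradients.

v3: (1) Extends BN from inside the Inception module to the layers outside it. (2) Refines the architecture by factorizing a larger two-dimensional convolution into two smaller one-dimensional convolutions, for example replacing a 3x3 convolution with a 1x3 convolution followed by a 3x1 convolution. This saves a large number of parameters, speeds up computation, and adds one more nonlinearity.

v4: Introduces residual connections.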
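The parameter saving from the v3 factorization can be checked with simple arithmetic. The sketch below counts the trainable parameters of one 3x3 convolution and of the 1x3 plus 3x1 pair that replaces it. The channel count of 64 is a hypothetical value chosen for illustration, and `conv_params` is a hypothetical helper.

```python
def conv_params(kernel_h, kernel_w, in_ch, out_ch, bias=True):
    """Number of trainable parameters in one 2-D convolution layer."""
    return kernel_h * kernel_w * in_ch * out_ch + (out_ch if bias else 0)

channels = 64  # hypothetical channel count, same for input and output
full = conv_params(3, 3, channels, channels)
factorized = (conv_params(1, 3, channels, channels)
              + conv_params(3, 1, channels, channels))
print(full, factorized)  # 36928 24704
```

Ignoring biases, the pair uses 6 weights per input-output channel pair instead of 9, a saving of one third.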

from tensorflow.keras import layers, models, Model, Sequential
from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, MaxPool2D, Dropout, Flatten, Dense, ReLU, GlobalAveragePooling2D

# A single convolution unit: Conv2D -> BN -> ReLU
class ConvBNRelu(Model):
    # Defaults: 3x3 kernel, stride 1, zero padding ('same')
    def __init__(self, ch, kernelsz=3, strides=1, padding='same'):
        super(ConvBNRelu, self).__init__()
        # Build the unit as a Sequential model
        self.model = tf.keras.models.Sequential([
            # number of filters / kernel size / stride / padding mode
            Conv2D(ch, kernelsz, strides=strides, padding=padding),
            BatchNormalization(),  # batch normalization layer
            Activation('relu')     # ReLU activation
        ])

    def call(self, x):
        # With training=False, BN normalizes with the moving mean and variance
        # accumulated during training; with training=True it uses the statistics
        # of the current batch. Note that training is fixed to False here.
        x = self.model(x, training=False)
        return x

# A single Inception block
class InceptionBlk(Model):
    def __init__(self, ch, strides=1):
        super(InceptionBlk, self).__init__()
        self.ch = ch
        self.strides = strides
        # Define the four branches of the Inception structure in order
        self.c1 = ConvBNRelu(ch, kernelsz=1, strides=strides)
        self.c2_1 = ConvBNRelu(ch, kernelsz=1, strides=strides)
        self.c2_2 = ConvBNRelu(ch, kernelsz=3, strides=1)
        self.c3_1 = ConvBNRelu(ch, kernelsz=1, strides=strides)
        self.c3_2 = ConvBNRelu(ch, kernelsz=5, strides=1)
        # 3x3 max pooling, stride 1, zero padding ('same')
        self.p4_1 = MaxPool2D(3, strides=1, padding='same')
        self.c4_2 = ConvBNRelu(ch, kernelsz=1, strides=strides)

    # Forward pass
    def call(self, x):
        x1 = self.c1(x)
        x2_1 = self.c2_1(x)
        x2_2 = self.c2_2(x2_1)
        x3_1 = self.c3_1(x)
        x3_2 = self.c3_2(x3_1)
        x4_1 = self.p4_1(x)
        x4_2 = self.c4_2(x4_1)
        # Concatenate the four branches along the channel axis (axis=3)
        x = tf.concat([x1, x2_2, x3_2, x4_2], axis=3)
        return x

class Inception(Model):
    # init_ch defaults to 16, i.e. 16 filters per branch
    def __init__(self, num_blocks, num_classes, init_ch=16, **kwargs):
        super(Inception, self).__init__(**kwargs)
        self.in_channels = init_ch
        self.out_channels = init_ch
        self.num_blocks = num_blocks
        self.init_ch = init_ch
        # Stem convolution with the ConvBNRelu defaults (3x3 kernel, stride 1)
        self.c1 = ConvBNRelu(init_ch)
        # Container for the Inception blocks
        self.blocks = tf.keras.models.Sequential()
        # The model is later created with num_blocks=2, i.e. two blocks
        for block_id in range(num_blocks):
            for layer_id in range(2):
                if layer_id == 0:
                    # First layer of the block: Inception block with stride 2
                    block = InceptionBlk(self.out_channels, strides=2)
                else:
                    # Second layer of the block: Inception block with stride 1
                    block = InceptionBlk(self.out_channels, strides=1)
                self.blocks.add(block)
            # Enlarge out_channels per block: the stride-2 layer halves the
            # spatial size, so the channel count is doubled to keep the
            # information capacity of the feature maps roughly constant
            self.out_channels *= 2
        # After the Inception blocks the feature map has 128 channels,
        # which are fed to global average pooling
        self.p1 = GlobalAveragePooling2D()
        # num_classes is the number of output classes
        self.f1 = Dense(num_classes, activation='softmax')

    def call(self, x):
        # Run the four stages: stem, Inception blocks, pooling, classifier
        x = self.c1(x)
        x = self.blocks(x)
        x = self.p1(x)
        y = self.f1(x)
        return y
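The figure of 128 channels follows from the block structure: each `InceptionBlk` concatenates four branches of `ch` channels, and `ch` doubles after every block. The sketch below traces the spatial size and channel count in plain Python, assuming the structure in the code above; `inception_output` is a hypothetical helper.

```python
import math

def inception_output(size, init_ch=16, num_blocks=2, branches=4):
    """Trace (spatial size, channels) through the Inception model.

    Assumes a stem convolution that keeps the size, then per block one
    stride-2 InceptionBlk followed by one stride-1 InceptionBlk, with
    the per-branch width doubling after each block.
    """
    channels = init_ch          # stem ConvBNRelu(init_ch), padding='same'
    branch_ch = init_ch
    for _ in range(num_blocks):
        size = math.ceil(size / 2)        # stride-2 block halves the size
        channels = branches * branch_ch   # four branches are concatenated
        branch_ch *= 2
    return size, channels

print(inception_output(28))  # (7, 128)
print(inception_output(24))  # (6, 128)
```

Global average pooling then reduces either feature map to a 128-element vector, which is why the same model accepts both 28x28 and 24x24 inputs.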

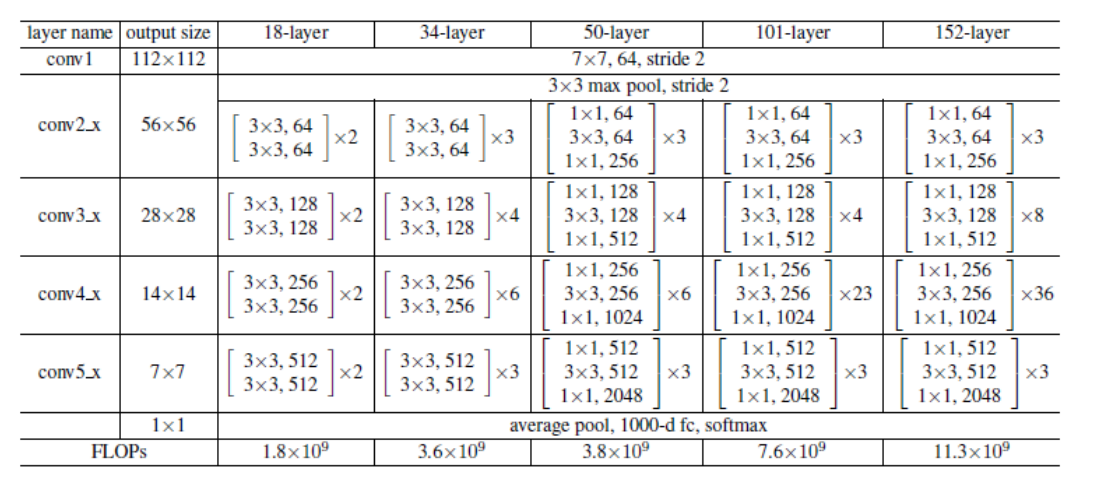

Defining the ResNet architecture

This report uses ResNet18.

class ResnetBlock(Model):
    def __init__(self, filters, strides=1, residual_path=False):
        super(ResnetBlock, self).__init__()
        self.filters = filters
        self.strides = strides
        self.residual_path = residual_path
        self.c1 = Conv2D(filters, (3, 3), strides=strides, padding='same', use_bias=False)
        self.b1 = BatchNormalization()
        self.a1 = Activation('relu')
        self.c2 = Conv2D(filters, (3, 3), strides=1, padding='same', use_bias=False)
        self.b2 = BatchNormalization()
        # When residual_path is True, downsample the input with a 1x1 convolution
        # so that x and F(x) have the same shape and can be added
        if residual_path:
            self.down_c1 = Conv2D(filters, (1, 1), strides=strides, padding='same', use_bias=False)
            self.down_b1 = BatchNormalization()
        self.a2 = Activation('relu')

    def call(self, inputs):
        residual = inputs  # the shortcut is the input itself, i.e. residual = x
        # Pass the input through convolution, BN, and activation to compute F(x)
        x = self.c1(inputs)
        x = self.b1(x)
        x = self.a1(x)
        x = self.c2(x)
        y = self.b2(x)
        if self.residual_path:
            residual = self.down_c1(inputs)
            residual = self.down_b1(residual)
        # The output is the sum of both paths, F(x) + x or F(x) + Wx, followed by ReLU
        out = self.a2(y + residual)
        return out

class ResNet18(Model):
    # block_list gives the number of ResnetBlock instances in each stage
    def __init__(self, block_list, initial_filters=64):
        super(ResNet18, self).__init__()
        self.num_block = len(block_list)  # number of stages
        self.block_list = block_list
        self.out_filters = initial_filters
        # The original paper takes 224x224 three-channel images and starts with
        # a 7x7 convolution; the parameters are adapted here for small inputs
        self.c1 = Conv2D(self.out_filters, (3, 3), strides=1, padding='same', use_bias=False)
        self.b1 = BatchNormalization()
        self.a1 = Activation('relu')
        self.blocks = tf.keras.Sequential()
        # Build the ResNet body
        # The model is later created with [2, 2, 2, 2]: four stages,
        # each with two ResnetBlock instances of two convolutions each
        for block_id in range(len(block_list)):  # index of the stage
            for layer_id in range(block_list[block_id]):  # index of the block in this stage
                if block_id != 0 and layer_id == 0:
                    # Downsample at the input of every stage except the first
                    block = ResnetBlock(self.out_filters, strides=2, residual_path=True)
                else:
                    block = ResnetBlock(self.out_filters, residual_path=False)
                self.blocks.add(block)
            # self.out_filters *= 2  # doubling the filters per stage is disabled here
        self.p1 = tf.keras.layers.GlobalAveragePooling2D()
        self.f1 = tf.keras.layers.Dense(
            10, activation='softmax', kernel_regularizer=tf.keras.regularizers.l2())

    def call(self, inputs):
        x = self.c1(inputs)
        x = self.b1(x)
        x = self.a1(x)
        x = self.blocks(x)
        x = self.p1(x)
        y = self.f1(x)
        return y
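The "18" in ResNet18 counts the weighted layers on the main path, which can be derived from the `[2, 2, 2, 2]` block list used later in this report. A minimal sketch in plain Python; `count_weighted_layers` is a hypothetical helper.

```python
def count_weighted_layers(block_list, convs_per_block=2):
    """Count the layers that give the network its name.

    One stem convolution, two 3x3 convolutions per residual block, and
    the final dense layer. The 1x1 shortcut convolutions are not
    counted, following the usual naming convention.
    """
    return 1 + convs_per_block * sum(block_list) + 1

print(count_weighted_layers([2, 2, 2, 2]))  # 18
print(count_weighted_layers([3, 4, 6, 3]))  # 34, the ResNet34 configuration
```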

Training: MLP on MNIST

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = MLP()
data_loader = MNISTLoader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))
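Every training loop in this report minimizes the mean sparse categorical cross-entropy, which for one sample is the negative log of the probability the model assigns to the true class. The sketch below computes it by hand in plain Python as a stand-in for the TensorFlow call; `sparse_ce` and the sample values are hypothetical.

```python
import math

def sparse_ce(y_true, y_pred):
    """Per-sample loss: -log of the probability assigned to the true class."""
    return [-math.log(probs[label]) for label, probs in zip(y_true, y_pred)]

# Two hypothetical samples with three classes each.
y_true = [0, 2]
y_pred = [[0.7, 0.2, 0.1],
          [0.1, 0.1, 0.8]]
losses = sparse_ce(y_true, y_pred)
mean_loss = sum(losses) / len(losses)
print(round(mean_loss, 4))  # 0.2899
```

"Sparse" refers to the labels being integer class indices rather than one-hot vectors, which is why the loaders cast labels to `np.int32`.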

Testing: MLP on MNIST

Accuracy: 97.76%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.977600
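The test loop evaluates `num_test_data // batch_size` full batches, so any remainder would be skipped if the test set size were not a multiple of the batch size. The sketch below shows one way to cover every sample, together with the accuracy rule that `SparseCategoricalAccuracy` applies (the predicted class is the one with the highest probability). Both helpers and the sample values are hypothetical and written in plain Python.

```python
def batch_ranges(num_samples, batch_size):
    """Yield (start, end) index pairs that cover every sample.

    Unlike num_samples // batch_size full batches, the last range may be
    shorter, so no sample is skipped when the sizes do not divide evenly.
    """
    for start in range(0, num_samples, batch_size):
        yield start, min(start + batch_size, num_samples)

def accuracy(labels, probabilities):
    """Fraction of samples whose highest-probability class is the label."""
    correct = 0
    for label, probs in zip(labels, probabilities):
        predicted = max(range(len(probs)), key=probs.__getitem__)
        correct += int(predicted == label)
    return correct / len(labels)

print(list(batch_ranges(120, 50)))  # [(0, 50), (50, 100), (100, 120)]
acc = accuracy([0, 2, 1], [[0.7, 0.2, 0.1], [0.1, 0.1, 0.8], [0.6, 0.3, 0.1]])
print(acc)  # two of the three hypothetical predictions are correct
```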

Training: MLP on Fashion MNIST

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = MLP()
data_loader = Fashion_MNISTLoader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    print("epoch %d" % (e + 1))
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
            print("batch %d: loss %f" % (batch_index, loss.numpy()))
        # Differentiate with respect to the trainable weights only
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))

Testing: MLP on Fashion MNIST

Accuracy: 87.73%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.877300

Training: MLP on HWDB1

Train for 100 epochs.

num_epochs = 100
batch_size = 50
learning_rate = 0.001
model = MLP()
data_loader = HWDB1Loader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

Testing: MLP on HWDB1

Accuracy: 84.17%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.841667

Training: LeNet on MNIST

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = LeNet()
data_loader = MNISTLoader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

Testing: LeNet on MNIST

Accuracy: 98.68%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.986800

Training: LeNet on Fashion MNIST

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = LeNet()
data_loader = Fashion_MNISTLoader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

Testing: LeNet on Fashion MNIST

Accuracy: 85.82%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.858200

Training: LeNet on HWDB1

Train for 100 epochs.

num_epochs = 100
batch_size = 50
learning_rate = 0.001
model = LeNet()
data_loader = HWDB1Loader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

Testing: LeNet on HWDB1

Accuracy: 91.33%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.913333

Training: AlexNet on MNIST

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = AlexNet()
data_loader = MNISTLoader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only; the BN
        # moving statistics are non-trainable and have no gradient
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

epoch 1: loss 0.067972

epoch 2: loss 0.008088

epoch 3: loss 0.002195

epoch 4: loss 0.000097

epoch 5: loss 0.008648

epoch 6: loss 0.002917

epoch 7: loss 0.024030

epoch 8: loss 0.000092

epoch 9: loss 0.003540

epoch 10: loss 0.003601

Testing: AlexNet on MNIST

Accuracy: 98.91%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.989100

Training: AlexNet on Fashion MNIST

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = AlexNet()
data_loader = Fashion_MNISTLoader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only; the BN
        # moving statistics are non-trainable and have no gradient
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

epoch 1: loss 0.512883

epoch 2: loss 0.372691

epoch 3: loss 0.317794

epoch 4: loss 0.209880

epoch 5: loss 0.259238

epoch 6: loss 0.246677

epoch 7: loss 0.325049

epoch 8: loss 0.085606

epoch 9: loss 0.231111

epoch 10: loss 0.108106

Testing: AlexNet on Fashion MNIST

Accuracy: 90.57%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.905700

Training: AlexNet on HWDB1

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = AlexNet()
data_loader = HWDB1Loader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only; the BN
        # moving statistics are non-trainable and have no gradient
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

epoch 1: loss 2.154262

epoch 2: loss 1.550519

epoch 3: loss 1.117614

epoch 4: loss 0.796395

epoch 5: loss 0.482055

epoch 6: loss 0.261059

epoch 7: loss 0.598217

epoch 8: loss 0.408028

epoch 9: loss 0.527755

epoch 10: loss 0.232926

Testing: AlexNet on HWDB1

Accuracy: 89.67%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.896667

Training: GoogLeNet on MNIST

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = Inception(num_blocks=2, num_classes=10)
data_loader = MNISTLoader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only; the BN
        # moving statistics are non-trainable and have no gradient
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

epoch 1: loss 0.226245

epoch 2: loss 0.046722

epoch 3: loss 0.043534

epoch 4: loss 0.005478

epoch 5: loss 0.005200

epoch 6: loss 0.020319

epoch 7: loss 0.087346

epoch 8: loss 0.008595

epoch 9: loss 0.035517

epoch 10: loss 0.025345

Testing: GoogLeNet on MNIST

Accuracy: 99.27%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.992700

Training: GoogLeNet on Fashion MNIST

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = Inception(num_blocks=2, num_classes=10)
data_loader = Fashion_MNISTLoader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only; the BN
        # moving statistics are non-trainable and have no gradient
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

epoch 1: loss 0.555443

epoch 2: loss 0.369227

epoch 3: loss 0.424934

epoch 4: loss 0.332159

epoch 5: loss 0.254867

epoch 6: loss 0.216396

epoch 7: loss 0.330004

epoch 8: loss 0.130716

epoch 9: loss 0.225775

epoch 10: loss 0.072902

Testing: GoogLeNet on Fashion MNIST

Accuracy: 90.27%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.902700

Training: GoogLeNet on HWDB1

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = Inception(num_blocks=2, num_classes=10)
data_loader = HWDB1Loader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only; the BN
        # moving statistics are non-trainable and have no gradient
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

epoch 1: loss 2.175095

epoch 2: loss 1.401912

epoch 3: loss 1.073104

epoch 4: loss 0.582406

epoch 5: loss 0.561393

epoch 6: loss 0.342880

epoch 7: loss 0.225490

epoch 8: loss 0.534456

epoch 9: loss 0.142884

epoch 10: loss 0.083469

Testing: GoogLeNet on HWDB1

Accuracy: 91.50%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.915000

Training: ResNet on MNIST

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = ResNet18([2, 2, 2, 2])
data_loader = MNISTLoader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only; the BN
        # moving statistics are non-trainable and have no gradient
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

epoch 1: loss 0.009949

epoch 2: loss 0.026236

epoch 3: loss 0.016156

epoch 4: loss 0.000139

epoch 5: loss 0.003694

epoch 6: loss 0.004449

epoch 7: loss 0.001600

epoch 8: loss 0.001913

epoch 9: loss 0.001122

epoch 10: loss 0.005298

Testing: ResNet on MNIST

Accuracy: 99.21%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.992100

Training: ResNet on Fashion MNIST

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = ResNet18([2, 2, 2, 2])
data_loader = Fashion_MNISTLoader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    print("epoch %d" % (e + 1))
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
            print("batch %d: loss %f" % (batch_index, loss.numpy()))
        # Differentiate with respect to the trainable weights only; the BN
        # moving statistics are non-trainable and have no gradient
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))

Testing: ResNet on Fashion MNIST

Accuracy: 91.35%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.913500

Training: ResNet on HWDB1

Train for 10 epochs.

num_epochs = 10
batch_size = 50
learning_rate = 0.001
model = ResNet18([2, 2, 2, 2])
data_loader = HWDB1Loader()
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)
num_batches = int(data_loader.num_train_data // batch_size)
for e in range(num_epochs):
    for batch_index in range(num_batches):
        X, y = data_loader.get_batch(batch_size)
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.keras.losses.sparse_categorical_crossentropy(
                y_true=y, y_pred=y_pred)
            loss = tf.reduce_mean(loss)
        # Differentiate with respect to the trainable weights only; the BN
        # moving statistics are non-trainable and have no gradient
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.trainable_variables))
    # Report the loss of the last batch in this epoch
    print("epoch %d: loss %f" % (e + 1, loss.numpy()))

epoch 1: loss 2.286184

epoch 2: loss 1.170036

epoch 3: loss 0.718102

epoch 4: loss 0.766253

epoch 5: loss 0.271660

epoch 6: loss 0.121803

epoch 7: loss 0.105720

epoch 8: loss 0.029223

epoch 9: loss 0.422725

epoch 10: loss 0.005966

Testing: ResNet on HWDB1

Accuracy: 93.67%

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
num_batches = int(data_loader.num_test_data // batch_size)
for batch_index in range(num_batches):
    start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
    y_pred = model.predict(data_loader.test_data[start_index: end_index])
    sparse_categorical_accuracy.update_state(
        y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
print("test accuracy: %f" % sparse_categorical_accuracy.result())

test accuracy: 0.936667